reader comments

reader comments

51 with 38 posters participating, including story author

Every day, some little piece of logic constructed by very specific bits of artificial intelligence technology makes decisions that affect how you experience the world. It could be the ads that get served up to you on social media or shopping sites, or the facial recognition that unlocks your phone, or the directions you take to get to wherever you’re going. These discreet, unseen decisions are being made largely by algorithms created by machine learning (ML), a segment of artificial intelligence technology that is trained to identify correlation between sets of data and their outcomes. We’ve been hearing in movies and TV for years that computers control the world, but we’ve finally reached the point where the machines are making real autonomous decisions about stuff. Welcome to the future, I guess.

In my days as a staffer at Ars, I wrote no small amount about artificial intelligence and machine learning. I talked with data scientists who were building predictive analytic systems based on terabytes of telemetry from complex systems, and I babbled with developers trying to build systems that can defend networks against attacks—or, in certain circumstances, actually stage those attacks. I’ve also poked at the edges of the technology myself, using code and hardware to plug various things into AI programming interfaces (sometimes with horror-inducing results, as demonstrated by Bearlexa).

Many of the problems to which ML can be applied are tasks whose conditions are obvious to humans. That’s because we’re trained to notice those problems through observation—which cat is more floofy or at what time of day traffic gets the most congested. Other ML-appropriate problems could be solved by humans as well given enough raw data—if humans had a perfect memory, perfect eyesight, and an innate grasp of statistical modeling, that is.

But machines can do these tasks much faster because they don’t have human limitations. And ML allows them to do these tasks without humans having to program out the specific math involved. Instead, an ML system can learn (or at least “learn”) from the data given to it, creating a problem-solving model itself.

This bootstrappy strength can also be a weakness, however. Understanding how the ML system arrived at its decision process is usually impossible once the ML algorithm is built (despite ongoing work to create explainable ML). And the quality of the results depends a great deal on the quality and the quantity of the data. ML can only answer questions that are discernible from the data itself. Bad data or insufficient data yields inaccurate models and bad machine learning.

Beth Mole, please report to collect your award.)

And headline writing is hard! It’s a task with lots of constraints—length being the biggest (Ars headlines are limited to 70 characters), but nowhere near the only one. It is a challenge to cram into a small space enough information to accurately and adequately tease a story, while also including all the things you have to put into a headline (the traditional “who, what, where, when, why, and how many” collection of facts). Some of the elements are dynamic—a “who” or a “what” with a particularly long name that eats up the character count can really throw a wrench into things.

Plus, we know from experience that Ars readers do not like clickbait and will fill up the comments section with derision when they think they see it. We also know that there are some things that people will click on without fail. And we also know that regardless of the topic, some headlines result in more people clicking on them than others. (Is this clickbait? There’s a philosophical argument there, but the primary thing that separates “a headline everyone wants to click on” from “clickbait” is the headline’s honesty—does the story beneath the headline fully deliver on the headline’s promise?)

Regardless, we know that some headlines are more effective than others because we do A/B testing of headlines. Every Ars article starts with two possible headlines assigned to it, and then the site presents both alternatives on the home page for a short period to see which one pulls in more traffic.

There have been a few studies done by data scientists with much more experience in data modeling and machine learning that have looked into what distinguishes “clickbait” headlines (ones designed strictly for getting large numbers of people to click through to an article) from “good” headlines (ones that actually summarize the articles behind them effectively and don’t make you write lengthy complaints about the headlines on Twitter or in the comments). But these studies have been focused on understanding the content of the headlines rather than how many actual clicks they get.

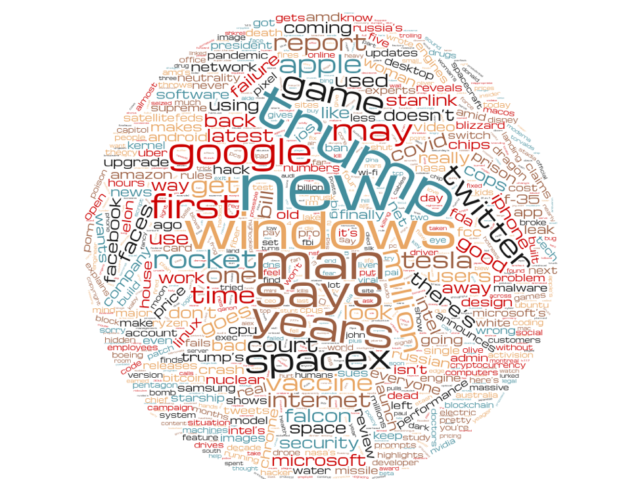

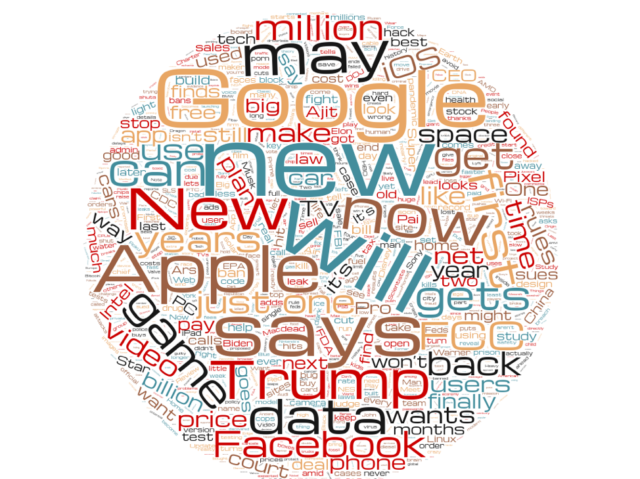

There is a whole lot of Trump in there—the last few years have included a lot of tech news involving the administration, so it’s probably inevitable. But these are just the words from some of the winning headlines. I wanted to get a sense of what the difference between winning and losing headlines were. So I again took the corpus of all Ars headline pairs and split them between winners and losers. These are the winners:

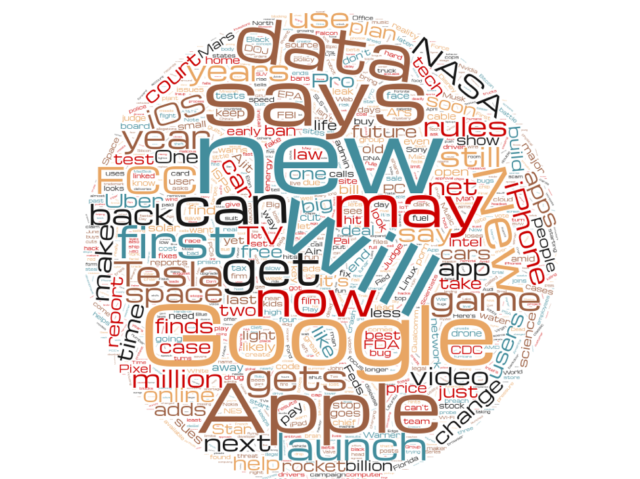

And here are the losers:

Remember that these headlines were written for the exact same stories as the winning headlines were. And for the most part, they use the same words—with some notable differences. There’s a whole lot less “Trump” in the losing headlines. “Million” is heavily favored in winning headlines, but somewhat less so in losing ones. And the word “may”—a pretty indecisive headline word—is found more frequently in losing headlines than winning ones.

This is interesting information, but it doesn’t in itself help predict whether a headline for any given story will be successful. Would it be possible to use ML to predict whether a headline would get more or fewer clicks? Could we use the accumulated wisdom of Ars readers to make a black box that could predict which headlines would be more successful?

Hell if I know, but we’re going to try.

All this brings us to where we are now: Ars has given me data on over 5,500 headline tests over the past four years—11,000 headlines, each with their rate of click-throughs. My mission is to build a machine learning model that can calculate what makes a good Ars headline. And by “good,” I mean one that appeals to you, dear Ars reader. To accomplish this, I have been given a small budget for Amazon Web Services compute resources and a month of nights and weekends (I have a day job, after all). No problem, right?

Before I started hunting Stack Exchange and various Git sites for magical solutions, however, I wanted to ground myself in what’s possible with ML and look at what more talented people than I have already done with it. This research is as much of a roadmap for potential solutions as it is a source of inspiration.