Like much of the internet, PubPeer is the sort of place where you might want to be anonymous. There, under randomly assigned taxonomic names like Actinopolyspora biskrensis (a bacterium) and Hoya camphorifolia (a flowering plant), “sleuths” meticulously document mistakes in the scientific literature. Though they write about all sorts of errors, from bungled statistics to nonsensical methodology, their collective expertise is in manipulated images: clouds of protein that show suspiciously crisp edges, or identical arrangements of cells in two supposedly distinct experiments. Sometimes, these irregularities mean nothing more than that a researcher tried to beautify a figure before submitting it to a journal. But they nevertheless raise red flags.

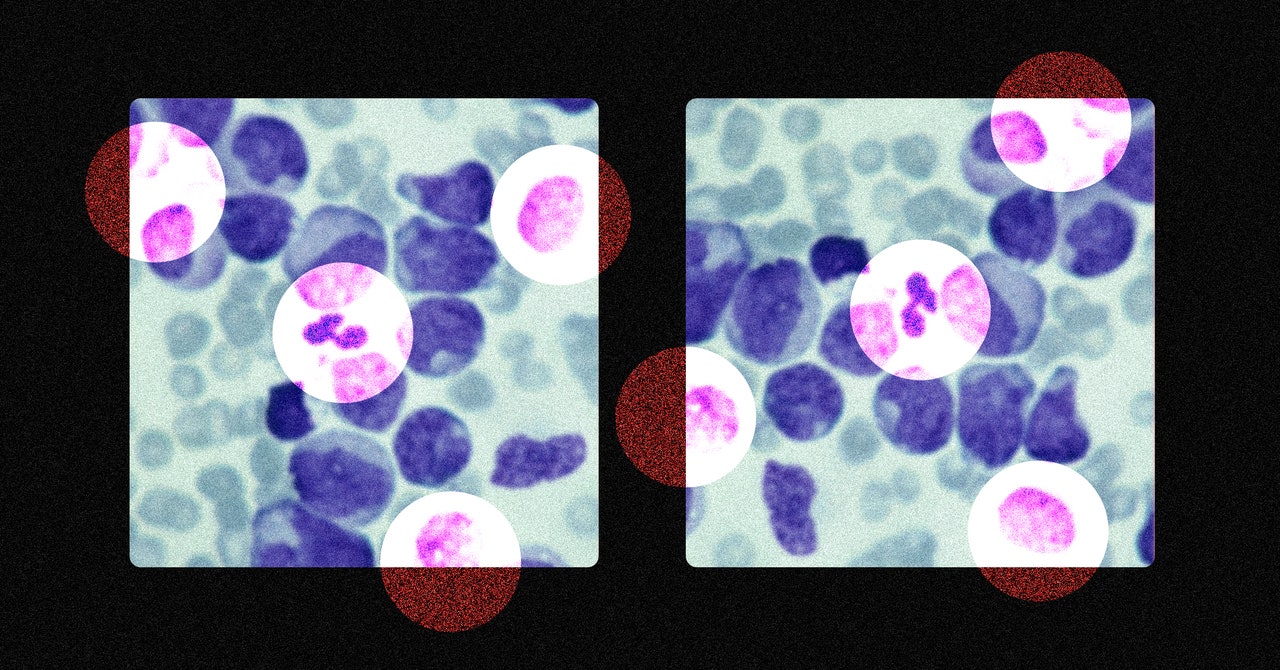

PubPeer’s rarefied community of scientific detectives has produced an unlikely celebrity: Elisabeth Bik, who uses her uncanny acuity to spot image duplications that would be invisible to practically any other observer. Such duplications can allow scientists to conjure results out of thin air by Frankensteining parts of many images together or to claim that one image represents two separate experiments that produced similar results. But even Bik’s preternatural eye has limitations: It’s possible to fake experiments without actually using the same image twice. “If there’s a little overlap between the two photos, I can nail you,” she says. “But if you move the sample a little farther, there’s no overlap for me to find.” When the world’s most visible expert can’t always identify fraud, combating it—or even studying it—might seem an impossibility.

Nevertheless, good scientific practices can effectively reduce the impact of fraud (that is, outright fakery) on science, whether or not it is ever discovered. Fraud “cannot be excluded from science, just like we cannot exclude murder in our society,” says Marcel van Assen, a principal investigator in the Meta-Research Center at the Tilburg School of Social and Behavioral Sciences. But as researchers and advocates continue to push science to be more open and impartial, he says, fraud “will be less prevalent in the future.”

Alongside sleuths like Bik, “metascientists” like van Assen are the world’s fraud experts. These researchers systematically track the scientific literature in an effort to ensure it is as accurate and robust as possible. Metascience has existed in its current incarnation since 2005, when John Ioannidis—a once-lauded Stanford University professor who has recently fallen into disrepute for his views on the Covid-19 pandemic, such as a fierce opposition to lockdowns—published a paper with the provocative title “Why Most Published Research Findings Are False.” Small sample sizes and bias, Ioannidis argued, mean that incorrect conclusions often end up in the literature, and those errors are too rarely discovered, because scientists would much rather further their own research agendas than try to replicate the work of colleagues. Since that paper, metascientists have honed their techniques for studying bias, a term that covers everything from so-called “questionable research practices”—failing to publish negative results or applying statistical tests over and over again until you find something interesting, for example—to outright data fabrication or falsification.

They take the pulse of this bias by looking not at individual studies but at overall patterns in the literature. When smaller studies on a particular topic tend to show more dramatic results than larger studies, for example, that can be an indicator of bias. Smaller studies are more variable, so some of them will end up being dramatic by chance—and in a world where dramatic results are favored, those studies will get published more often. Other approaches involve looking at p-values, numbers that indicate whether a given result is statistically significant or not. If, across the literature on a given research question, too many p-values seem significant, and too few are not, then scientists may be using questionable approaches to try to make their results seem more meaningful.
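To make the small-study pattern concrete, here is a minimal simulation sketch in Python. It is not taken from any metascientist's actual toolkit. The function name `published_effects`, the true effect of 0.2, the 1.96 significance cutoff, and the rule that only significant positive results get published are all illustrative assumptions. Each simulated study's observed effect is drawn directly from its sampling distribution rather than from individual participants.

```python
import math
import random
import statistics


def published_effects(n_per_group, true_effect=0.2, n_studies=2000, seed=0):
    """Simulate many two-group studies and keep only the 'publishable' ones.

    Assumptions (hypothetical, for illustration only):
    - outcomes have unit variance, so the standard error of the difference
      in group means is sqrt(2 / n_per_group);
    - a study is published only if its effect is positive and significant
      at roughly the two-sided 5% level (z > 1.96).
    """
    rng = random.Random(seed)
    standard_error = math.sqrt(2.0 / n_per_group)
    published = []
    for _ in range(n_studies):
        # Draw the observed effect from its sampling distribution.
        observed = rng.gauss(true_effect, standard_error)
        if observed / standard_error > 1.96:
            published.append(observed)
    return published


if __name__ == "__main__":
    small = published_effects(n_per_group=20)
    large = published_effects(n_per_group=500)
    print("small studies published:", len(small),
          "mean effect:", round(statistics.fmean(small), 2))
    print("large studies published:", len(large),
          "mean effect:", round(statistics.fmean(large), 2))
```

Under these assumptions, the published small studies report an average effect well above the true value of 0.2, because only the chance overestimates clear the significance bar. The published large studies stay close to the true value. That gap between small and large studies is the kind of signature metascientists look for.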

But those patterns don’t indicate how much of that bias is attributable to fraud rather than dishonest data analysis or innocent errors. There’s a sense in which fraud is intrinsically unmeasurable, says Jennifer Byrne, a professor of molecular oncology at the University of Sydney who has worked to identify potentially fraudulent papers in the cancer literature. “Fraud is about intent. It’s a psychological state of mind,” she says. “How do you infer a state of mind and intent from a published paper?”